Building Automatic Fall Detection Systems with Computer Vision

Original Image Source by freepic.com

This article discusses using motion detection and pose estimation models for designing an automatic fall detection system for real-world applications.

💡 This is the early version of our work on a Fall Detection application for real-world use cases.

Update: You can now find our article on Human Action Recognition with computer vision to learn more about this area.


The Importance of Automatic Fall Detection Systems

Falls are one of the leading causes of injury among seniors in the US and worldwide. Every second of every day, an adult aged 65 or older suffers a fall in the US. These falls cause a variety of injuries, and many of them lead to death. According to UN reports, the population of people over 65 is expected to double in the next 30 years, which raises the importance of designing automatic, affordable, and accessible fall detection systems. Interest in such systems is therefore likely to grow in the coming years. Government and healthcare organizations have focused on building reliable fall detection systems in recent years. Various types of these systems have been designed and are currently in use with satisfactory results. However, they mainly take the form of “Wearable Devices” or “Placed Sensors,” which limits their use for large-scale monitoring.

But what if falls could be detected automatically and in real time with cheap, widely available devices like IP cameras? Prompt medical care could then reach many injured people and keep their injuries from becoming serious.

Thanks to the prevalence of surveillance and IP cameras and the rich data they provide from numerous environments, we can now use the capabilities of Artificial Intelligence in this field. These cameras are already installed in hospitals, terminals, kindergartens, streets, and, most importantly, in elderly care centers. Therefore, computer vision-based fall detection algorithms might have a high impact on life quality and health assistance systems.

Galliot has substantial experience in developing computer vision-based applications, especially Pose Estimation models. Since Pose Estimation techniques are critical for building robust Fall Detection solutions, we decided to use our experience to build a fall detection application.

This article will focus on computer vision-based solutions for fall detection. We try to explain a part of our work on developing a Fall Detection system using Pose Estimation and Motion Detection. We will walk through the highlights of what we did to develop an automatic real-time fall detection service.

Note:

In this post, we do not aim to go through the details of the code. It is a high-level explanation of the algorithms, alongside the pros and cons of each solution, for designing a simple Fall Detection application.

Fall Detection Algorithm based on Pose Estimation

If we want to define or formulate a “Fall” in a scene, it would be a sudden change in the position (posture) of the body parts, for example, changing from a standing (vertical) to a lying (horizontal) position. Therefore, pose estimation can be a helpful technique to track and estimate the state of the body parts and use their changes to detect falls.

Choosing the Appropriate Pose Estimation Model for Automatic Fall Detection

We have a lot of experience working with different 2D Pose Estimation models, so the following lines refer to 2D Pose Estimation. Among the various models developed for Pose Estimation, AlphaPose, OpenPifPaf, and OpenPose are the most important open-source ones. We have worked with and optimized OpenPifPaf and AlphaPose to implement them in some of our applications.

Read more about our work on the OpenPifPaf Pose Estimation model.

You can also refer to the TinyPose article about our edge device-friendly Pose Estimation model using AlphaPose.

Based on our experience working with these models, we decided to use the AlphaPose algorithm for extracting the body’s skeleton in this work. We chose it over the others for several reasons, including its higher speed, relatively higher accuracy, and available TensorRT version.

How to detect Falls using a Pose Estimation model: (The Pipeline)

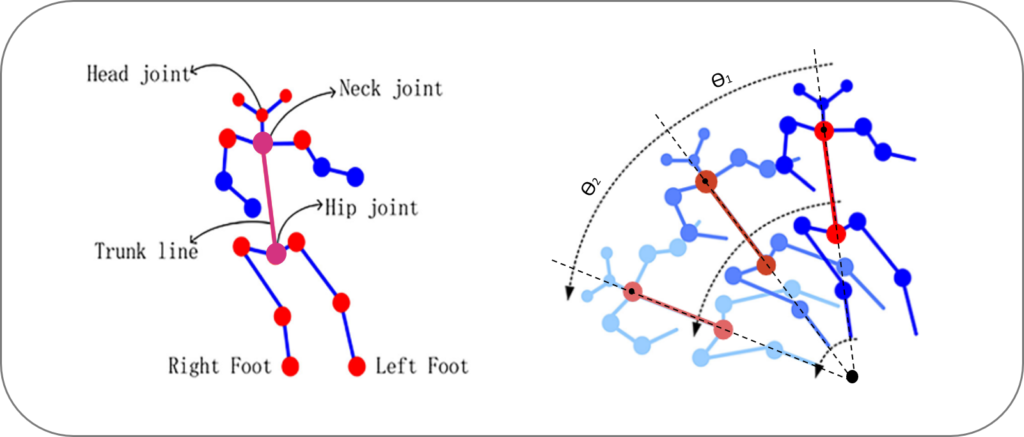

As we explained in our Human Pose Estimation article, pose estimators can detect and localize the body joints (head, shoulders, knees, hips, etc.) in each frame of an input video. So, how can we use this ability to detect falls? We should rely on the body points that stay most stable in typical, non-fall postures. The backbone is one of the most rigid parts of the body, with the smallest changes in direction, angle, and movement in typical situations such as walking. During a fall, however, the backbone’s movement parameters change quickly, which makes it a good signal for fall detection with pose estimation.

Finally, to detect a fall using pose estimation algorithms, we perform the following process: We first draw a hypothetical line between the Neck and Hip joints of a body, called the “Trunk Line.” Then, we track the changes in the angle of this trunk line at constant intervals. If these changes exceed our predefined threshold in two consecutive intervals, we consider the movement a fall.
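The trunk-line rule described above can be sketched in a few lines of Python. This is only a minimal illustration, not our production code: the function names, the choice to measure the angle against the image vertical, and the 25-degree threshold are assumptions made for the example, and the key points are assumed to be (x, y) pixel coordinates coming from any 2D pose estimator.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]  # (x, y) in image pixels


def trunk_angle(neck: Point, hip: Point) -> float:
    """Angle in degrees between the neck-hip line and the image vertical.

    0 means an upright trunk, 90 means a horizontal trunk.
    """
    dx = neck[0] - hip[0]
    dy = neck[1] - hip[1]
    return math.degrees(math.atan2(abs(dx), abs(dy)))


def detect_fall(angles: Sequence[float], threshold_deg: float = 25.0) -> bool:
    """Return True if the trunk angle changes by more than the threshold
    in two consecutive intervals.

    `angles` holds the trunk angle sampled at a constant time interval.
    The default threshold is a hypothetical value, not a tuned one.
    """
    changes = [abs(b - a) for a, b in zip(angles, angles[1:])]
    return any(
        first > threshold_deg and second > threshold_deg
        for first, second in zip(changes, changes[1:])
    )


# Usage: a trunk that goes from upright to horizontal within two intervals.
sampled_angles = [2.0, 5.0, 40.0, 85.0]
print(detect_fall(sampled_angles))
```

In practice, the sampling interval and the threshold would need to be tuned together, since a shorter interval produces smaller angle changes per step.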

You can see the results of our pose estimation-based fall detection model from two different views in the following videos.

Figure 3: Galliot Automatic Fall Detector based on Pose Estimation (Camera View 1 & 2)

Upsides and Downsides of Using Pose Estimation-based Fall Detection

Several parameters can affect our model’s performance in detecting a fall using pose estimation algorithms. The most important pros and cons of using this approach for detecting falls are as follows.

Advantages of the pose estimation-based fall detection approach:

This solution doesn’t require training a dedicated model or gathering large amounts of labeled data. We use a pre-trained open-source model as the core of this engine and define a rule on top of it to customize it for our use case. Moreover, real-time processing and compatibility with edge devices are further advantages of this approach. So, to recap, here are the most important pros of this method:

1- Available pre-trained models

2- No data labeling is required

3- Real-time processing and edge-device compatibility

Disadvantages of pose estimation-based fall detection approach:

1- Pose Estimation Recall

First, no pose estimator can detect the body key points in every environment and condition (e.g., different lighting, camera angles, postures, etc.). Yet our application needs a high “Recall,” i.e., we must detect almost every fall that happens, so we cannot rely on pose estimators alone for this work. As you can see in Figure 4, our pose estimator fails to detect the body joints (key points) and the trunk line due to the camera’s angle and environmental conditions.

2- Camera View and 2D Pose Estimation

Another weakness of this approach comes from our model’s 2D estimations. Since we are using 2D pose estimators, we miss changes in the trunk line that happen at certain angles to the frame, such as movements parallel to the camera view vector (Figure 5).

Consequently, due to the deficiencies of this approach in different situations, we cannot rely entirely on this algorithm for automatically detecting falls. Hence, we need an additional approach that can cover the blind spots of this solution.

💡 How can we improve our pose estimator for detecting falls?

Most available pose estimation models are trained on public datasets, which generally contain images of people in normal positions like standing. To improve our pose estimation model for detecting body key points with higher accuracy, we can build an exclusive training set of situations when people lie down or fall. We will then fine-tune our pose estimation model using this new dataset to detect the key points in these situations with higher accuracy.

Fall Detection Algorithm based on Motion Detection

Considering the weaknesses of our pose estimation-based fall detection approach, we should develop another algorithm that is robust to changes in the environment and to different body postures, and that covers the shortcomings of our previous solution. This algorithm should also be able to work alongside our pose estimation model. Thus, we decided to use Motion Detection (Optical Flow) algorithms to supplement our previous model for detecting falls.

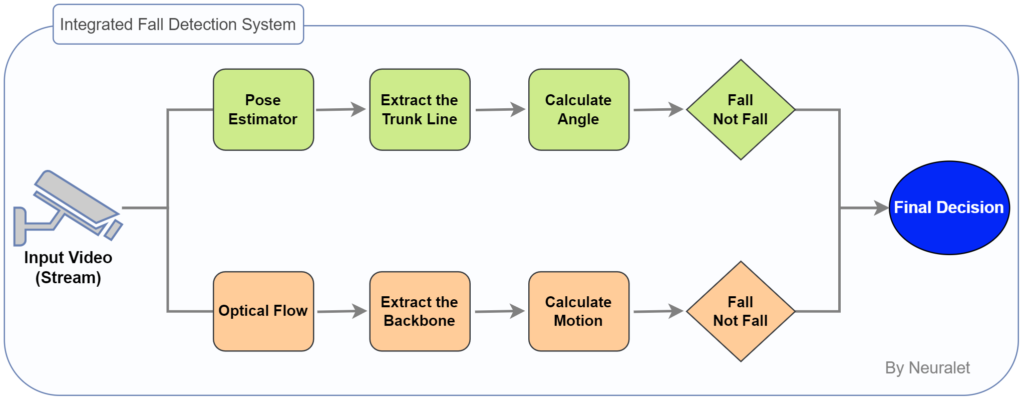

Our final system uses an integration of both Pose Estimation and Motion Detection-based systems to reach the highest Accuracy and Recall. We’ll explain the solution in the next section.

Why use Motion Detection alongside the Pose Estimation algorithm?

Motion Detection or classical Optical Flow algorithms are already available in most Computer Vision libraries such as OpenCV. They are easy to work with and use straightforward algorithms; thus, there is no need to train a model or gather large datasets.

💡 Modern Optical Flow techniques use training and deep learning methods for better detecting the movements. Here you can read more about novel Optical Flow methods. You can also refer to this Nanonets blog post for a comprehensive explanation of the technique we used in this work.

No learning happens in classical Motion Detection (Optical Flow) methods; their algorithms only compare the corresponding pixels of two or more consecutive frames. Because nothing is learned from a training set, the method does not depend on how well a particular environment was represented in training data.

Motion detection is an entirely distinct approach compared to pose estimation. So, we can suppose that the motion detection-based fall detection method can work as a complementary algorithm along with the pose estimation for detecting falls.

How to detect falls using Motion Detection

Based on the facts we mentioned about the rigid body parts and the “Trunk Line,” we can take these parts as our region of interest and track their movements to detect falls using this method as well. To do this, we will use an Object Detector to identify the desired body parts and feed them to our Motion Detector algorithm for tracking the changes in them. Then, if we find an unusual/sudden change in the backbone position, we would consider it a fall.

💡 After feeding our image and the region of interest to the Motion Detection algorithm, it provides the direction (angle) and speed (intensity) of the movement in that region. We can then compare these parameters with our threshold to decide if a sudden change (Fall) has happened or not.
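As a rough illustration of this step, the sketch below summarizes the motion inside a region of interest as a speed and a direction and compares them with thresholds. It is a sketch under stated assumptions: Farneback dense optical flow from OpenCV is used as one example of a classical method and is not necessarily the technique used in our system, and the helper names, the (x, y, w, h) region format, the speed threshold, and the "downward" angle range are all hypothetical.

```python
import numpy as np


def dense_flow(prev_gray, next_gray):
    """Dense optical flow between two grayscale frames (Farneback method).

    Returns an (H, W, 2) array of per-pixel (dx, dy) displacements.
    """
    import cv2  # imported lazily so the rest of the sketch only needs NumPy

    return cv2.calcOpticalFlowFarneback(
        prev_gray, next_gray, None, 0.5, 3, 15, 3, 5, 1.2, 0
    )


def summarize_roi_motion(flow, roi):
    """Return (speed, direction_deg) of the mean flow vector inside the ROI.

    `roi` is (x, y, w, h) in pixels. Speed is in pixels per frame pair.
    Direction is in degrees in image coordinates, so 90 points downward.
    """
    x, y, w, h = roi
    patch = flow[y:y + h, x:x + w].reshape(-1, 2)
    mean_dx, mean_dy = patch.mean(axis=0)
    speed = float(np.hypot(mean_dx, mean_dy))
    direction = float(np.degrees(np.arctan2(mean_dy, mean_dx))) % 360.0
    return speed, direction


def is_sudden_motion(speed, direction, min_speed=8.0, downward=(45.0, 135.0)):
    """Flag fast, mostly downward motion. Both thresholds are assumed values."""
    return speed >= min_speed and downward[0] <= direction <= downward[1]


# Usage with a synthetic flow field: the trunk region moves 10 px downward.
flow = np.zeros((100, 100, 2))
flow[20:60, 30:50] = (0.0, 10.0)
speed, direction = summarize_roi_motion(flow, (30, 20, 20, 40))
print(speed, direction, is_sudden_motion(speed, direction))
```

With real video, `dense_flow` would be called on consecutive grayscale frames, and the region of interest would come from the object detector described above.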

Downsides of using Motion Detection algorithms for detecting falls

Similar to the pose estimation-based fall detection approach, this method has some constraints that may cause false detections in our application. Just like pose estimation, it is a two-dimensional method that may fail to detect some movements. In addition, Optical Flow methods are usually sensitive to noise, such as changes in image lighting, which can lead to false estimations.

Galliot’s end-to-end Solution to a Reliable Automatic Fall Detection

So far, we have explained two distinct approaches to automatic fall detection with machine learning. As mentioned, each of these solutions has its own upsides and downsides. So, we recommend a system that integrates both. In our solution, the Pose Estimation-based and Motion Detection-based Fall Detection algorithms run independently (Figure 7), and we then combine their results to make a more reliable final decision. Using both the individual and the merged results of these two algorithms also lets us achieve a high “Recall” in our application.
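One simple way to combine two independent detectors is sketched below. The article does not specify the fusion rule, so everything here is an assumption for illustration: each detector is assumed to return a fall score between 0 and 1 (or `None` when it could not produce an output, for example when no key points were found), and the function name and both thresholds are hypothetical.

```python
from typing import Optional


def fuse_fall_scores(
    pose_score: Optional[float],
    motion_score: Optional[float],
    agree_min: float = 0.5,
    single_min: float = 0.8,
) -> bool:
    """Combine the outputs of two independent fall detectors.

    A fall is reported when both detectors agree with moderate scores
    (merged result), or when either one alone is highly confident
    (individual result). A score of None means the detector had no output.
    """
    scores = [s for s in (pose_score, motion_score) if s is not None]
    if not scores:
        return False
    if len(scores) == 2 and min(scores) >= agree_min:
        return True
    return max(scores) >= single_min


# Usage: the pose estimator lost the person, but motion detection is confident.
print(fuse_fall_scores(None, 0.9))
```

Accepting a confident result from a single detector favors Recall, since one method can still raise an alert when the other fails; requiring agreement for weaker scores limits false alarms.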

💡 How to distinguish “Falling” from “Lying Down”?

There are many intricacies in deciding whether a movement is a “Fall” or not. One of them is telling whether a person is lying down or falling. The main difference is in the speed of the movement and of the changes in the trunk line. So, we filter these movements using the speed of change in the trunk line angle to distinguish the two actions. Moreover, we can use an object detector to identify objects such as beds and couches and help the Fall Detection algorithm decide whether the movement is a fall or just lying down on a bed.
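The two cues above (speed of the trunk angle change and nearby furniture) can be sketched as a small filter. This is a hedged example only: the function names, the (x1, y1, x2, y2) box format, the 60 degrees-per-second speed limit, and the 0.5 overlap limit are assumptions, not values from our system.

```python
from typing import Optional, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def angular_speed(angle_start: float, angle_end: float, seconds: float) -> float:
    """Trunk angle change in degrees per second."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    return abs(angle_end - angle_start) / seconds


def overlap_ratio(person: Box, furniture: Box) -> float:
    """Fraction of the person box that is covered by the furniture box."""
    inter_w = max(0.0, min(person[2], furniture[2]) - max(person[0], furniture[0]))
    inter_h = max(0.0, min(person[3], furniture[3]) - max(person[1], furniture[1]))
    area = (person[2] - person[0]) * (person[3] - person[1])
    return (inter_w * inter_h) / area if area > 0 else 0.0


def classify_transition(
    angle_start: float,
    angle_end: float,
    seconds: float,
    person_box: Box,
    bed_box: Optional[Box] = None,
    min_fall_speed: float = 60.0,
    max_bed_overlap: float = 0.5,
) -> str:
    """Label a standing-to-horizontal transition as 'fall' or 'lying_down'."""
    # A slow change in trunk angle is treated as lying down.
    if angular_speed(angle_start, angle_end, seconds) < min_fall_speed:
        return "lying_down"
    # A fast change that ends mostly on a detected bed or couch is also
    # treated as lying down.
    if bed_box is not None and overlap_ratio(person_box, bed_box) >= max_bed_overlap:
        return "lying_down"
    return "fall"


# Usage: the trunk goes from 5 to 85 degrees in half a second, no bed nearby.
print(classify_transition(5.0, 85.0, 0.5, (0, 0, 100, 50)))
```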

Conclusion

Pose Estimation and Motion Detection are two solutions to Fall Detection using Machine Learning. In this article, we presented an overview of these methods with the upsides and downsides of each one. We have then explained our solution for building a more reliable Automatic Fall Detection System using both approaches.

We do not claim that our proposed algorithm is the best approach for a Vision-based Fall Detection algorithm. Here, we tried to simply use the tools we’ve employed in our previous works to create a simple fall detection application using machine learning algorithms that provides acceptable results.

Related Articles:

Human Action Recognition (HAR) with Computer Vision – Learn about human action recognition with deep learning, a subfield of computer vision that focuses on identifying specific actions performed by humans in videos or image sequences.

Is Machine Learning Always Good For Your Projects? – Explore whether machine learning (ML) suits your project, and learn about the factors to consider when deciding whether to incorporate ML into your software development process.

Data Labeling for Machine Learning Applications – Discover the different approaches to data labeling, the challenges of data labeling, and some of the popular tools available for data labeling in machine learning projects.

Top 25 Tools for Data Annotation and Labeling – Check out a comprehensive landscape of the top 25 data labeling tools for machine learning in 2023, including their features, pricing, and customer reviews.

An Introduction to Pose Estimation and Its Applications – Learn about human pose estimation with deep learning, a computer vision technique that involves identifying the pose of humans in images or videos.
